I’ve been revisiting Bloom’s Taxonomy this week while introducing some undergraduates to the basic idea behind the revised version of it.

Recent critiques (well, some of these are in fact long-standing) of the taxonomy are interesting, to wit:

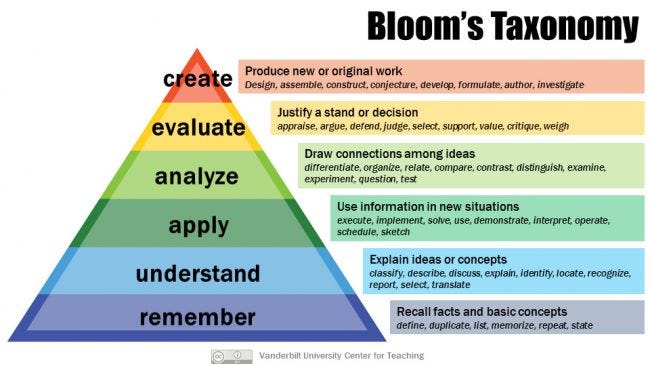

The taxonomy inaccurately describes the different terms as hierarchical, e.g., with “creating” at the top and “knowledge” or “remembering” at the bottom, and with the inference that “creating” is the highest-order mode of learning, one that makes use of all the other forms of learning, whereas “remembering” is just remembering, is “lower”, is “easier”.

The taxonomy inaccurately models the different terms as sequential: first you learn to remember/know information, then you learn to apply, then you learn to analyze, and so on, whereas in many cases these different modes or experiences of learning happen simultaneously.

The taxonomy is old, and while it may have been a generative, useful response to some really dire ideas about learning in behaviorism, it’s deeply inaccurate as an actual description of the cognitive processes involved in learning, which we understand far better today than we did either when the taxonomy was first proposed or when it was later revised. People just don’t think the way the model suggests, and as is often the case, the model has been used by many as if it were an empirical description of cognition.

The taxonomy excludes important parts of the process of learning, like motivation, and it understands learning in largely atomistic, individualized terms, not as social, collaborative or collective.

The taxonomy’s categories aren’t rigorously defined: what’s the difference between “apply” and “analyze”, really?

The taxonomy suggests a universal valuation of different metacognitive skills or modes as if “create” will always be the most important or most useful skill in any given real-world situation when instead it may be of limited value in most tasks, jobs and activities.

I’m not a researcher in educational studies, nor am I invested in the particular forms of cognitive science and social psychology that have reason to demand precision about some of these concepts and claims. However, as an educational practitioner I’m broadly in sympathy with most of these points. Yes, even if these words describe somewhat separable kinds of learning and application of learned skills, even for beginning learners they’re often simultaneous and indistinguishable in how they’re experienced and expressed. Frequently, you succeed in remembering or knowing because you’ve tried to create or analyze. That’s a basic proposition of most courses in higher education: it’s the repeated attempt to do something that makes knowledge sticky and persistent.

And yet, I don’t want to banish Bloom’s Taxonomy, for two reasons.

The first is that as a model, it still accurately describes the ideology of a lot of teaching in higher education, even by faculty who could describe the technical and epistemological shortcomings of the taxonomy itself or who aspire to hold to some other value or point-of-view. It certainly describes the ideology that governs judgments about the worth of particular scholarship, about hiring faculty, about tenure processes, and about reputational esteem. Bloom’s Taxonomy describes how we think about merit and excellence within and across disciplines. We choose and exalt scholars in part on our perception of whether they dwell in the top of the pyramid most of the time.

I wrote about this issue a long time ago in my career in remarks on the career of the historian David Beach, who wrote about the precolonial history of Zimbabwe. I’d gotten to know him a bit in the time I’d lived there, though I’d originally avoided meeting him. Part of the reason I’d avoided him is that he just knew too damn much about his area of specialized interest—I was frankly scared that he’d start drilling me on my command over 18th Century Shona history, which was minimal when I first started out (and is not especially deep even today). He didn’t, but when he and several other historians working in African universities came to the US to do a speaking tour, they all remarked upon the degree to which the US-based scholars seemed to have an almost-cultist regard for the idea of originality in scholarship and a disdain for just patiently filling in the gaps in our knowledge. That startled me, because I’d just come back from judging a grant competition where all of the judges, including me, had talked dismissively about the applications that were “just gap-filling”.

I suspect we can all think of people at research universities in our fields of specialization who are that one person who knows everything about one thing—hedgehogs, in the Isaiah Berlin parlance—and often the relationship between them and the field is that people depend upon the hedgehog but they don’t exalt them. Often the hedgehog will have no replacement when they retire: the incentives against being a person who knows a lot but isn’t “advancing knowledge” (which is always about originality, creation, critical reappraisal, novel argument) are pretty fierce.

At least some faculty assess the work of undergraduates in terms of what faculty exalt for themselves and in themselves; sometimes that’s conscious, sometimes that’s unconscious. We’re the people who survived rounds of selection by teachers and faculty. Sometimes that came relatively late in our lives, sometimes it was from an early point on, sometimes it was despite adversity and discrimination, sometimes it was from underneath a cloak of privilege, but regardless, we ran the particular gauntlet set for people who want an academic career. The hurdles in that gauntlet had “create! evaluate! analyze!” written on them, and we learned to do that. Then that became the goal we set for our students in turn.

So we have to teach it because students have to know what they’re getting into. And because we need to be aware of our own inclinations.

Which leads to the second reason why I’m not quite ready to let Bloom’s go. Normally when you identify something that’s naturalized and normative as an ideology, you’re supposed to come out with your hands up. That’s what many of the critics of Bloom’s are doing: it’s not how people really learn, it’s not why people really learn, so if we are teachers, we have to teach to how people’s minds really work and to the purposes of education at the biggest and widest scale.

But having acknowledged it’s an ideology, I’m willing to be an ideologue, albeit with some reservations.

It’s true that there are many tasks in life, both at work and otherwise, that call upon the learned skills and information that Bloom’s Taxonomy places in its lower levels. It’s also true that denigrating those skills as easy, simple, common, widely distributed, is one of many worms that have turned the apple of meritocracy deeply rotten from the very beginning. Inasmuch as Bloom’s mirrors contemporary justifications of socioeconomic hierarchy, it is deeply tainted. Whether we’re talking about a primary care doctor, a car mechanic, a barista, a bus driver or an electrical engineer, or about a parent, a sibling, a partner, a friend, we are all of us best served by a person who remembers all the information that matters and can explain that information clearly.

When do most of us need to create or evaluate? To call back to a previous essay at Eight by Seven, in the face of the unknown and the uncertain. Which is to say therefore, all of us. The bus driver needs to make a quick assessment when there’s a violent-sounding outburst in the back: is it a drunk? a fight between passengers? a person who is ill? The primary care doctor has to sense when there’s a pattern of reported symptoms in relationship to a patient’s history and temperament that shouldn’t be quickly diagnosed as something familiar and common. The family member has to know when there’s something just enough off about another family member that they need to go to the ER to be checked for a minor stroke or other issue.

But this matters. When people are highly knowledgeable and able to explain their knowledge but are not able to create and evaluate, they’re missing something profoundly important. The reverse is true as well, but it’s a different absence. The knowledgeable person who is stubbornly unadaptive (and there are such people) will commit many sins of omission in a lifetime with customers, clients, students, friends and loved ones. The highly creative and adaptive person who doesn’t know much of anything and isn’t good at explaining what they know (and there are such people) will just be a bullshit artist—perhaps an exciting one, perhaps even sometimes a successful one, but always someone who mostly takes and doesn’t really give back.

Much of what we do to meaningfully create and evaluate in this sense in life is not the direct product of a highly concentrated period of learning in an educational institution. It is the emergent outcome of long-established practice combined with a temperamental and disciplined openness to novelty and a curiosity about the world combined with a motivation to do best by other people.

An education can address the last two; the best it can do for the first is be the first rung in a lifetime of practice (including the maintenance of professional and personal sociality). Whether we do what we should to cultivate curiosity and to do well by other people is a different question altogether, but I think that we should see educating for creation and evaluation as our most important (but not exclusive) aspiration.

I still want “create” and “evaluate” to be as important as the taxonomy proclaims them to be because, in the end, we are not just educating people to live in the world as it is right now. I do not want an educational system that neatly fits people to the tasks, obligations and roles that we see all around us, and it feels to me as if at least a few of Bloom’s critics are edging towards that in asking that we take what we are and what people do right now as a full map of what we need to service in an education.

We are also educating people into what has yet to be, both our unknown possibilities and our probable failures. “Create” and “evaluate” shouldn’t be limited to a kind of late-capitalist version of romantic individualist originality or a relentless critical rejection of the status quo, but whatever we can do to cultivate those capacities in any form of learning process goes towards increasing the range of what we might yet dream, invent or understand and what we might do to survive and adapt to our collective mistakes.