Academia: I For One Do Not Welcome My Robot Overlords (Packback Edition)

Thursday's Child Has Far to Go

I’m coming from a privileged situation in terms of faculty working conditions: I teach at a wealthy small private liberal-arts school. My classes are small (the largest I’ve taught in almost thirty years was just shy of forty students), and I have a reasonable teaching load of two classes per semester. My students arrive with strong skills and have access to a wide range of support services in addition to faculty (peer advisors, student life professionals, etc.).

So it is in some sense easy for me to throw shade on a platform product like Packback. I don’t have to use it, and nobody is ever likely to try to make me. I want to be fair to the folks providing testimonials on Packback’s pages, because they’re working in very different circumstances than I am. Some of them are contingent faculty. Almost all of them are teaching large classes that have grown steadily larger because that’s the labor model at their institutions: fewer faculty teaching more students under more stressful conditions, even in pre-pandemic terms, and still more challenging in hybrid or wholly online contexts. In many cases, they’re under more top-down pressure than ever to improve student performance across a variety of metrics, pressure coming from increasingly remote and corporate administrations that have no interest in faculty professionalism or aspirations.

Many of those testifying to Packback’s usefulness understand that these circumstances make it very hard to teach effectively. We know that it makes a huge difference to students to be engaged directly as individuals and to receive feedback on their work as quickly as possible. If you’re trying to handle a 400-person class, whether on Zoom or in a physical classroom, you just can’t do that as one faculty member. You can’t do it all that well even with teaching assistants.

That’s what the people behind Packback know as well. They’re part of the long history of “teaching machines”, as Audrey Watters has termed them, technologies that remove the need for educational institutions and educational professionals by catalyzing self-learning.

What exactly does Packback do? As is fairly common in ed-tech, you can’t get a clear picture of what the platform actually does from the splash page, and even the “case studies” are strikingly vague: a lot of positive marketing-speak, soothing and reassuring, that only talks about the product’s use in general terms. Unless you’re willing to sign up for the demo (and all the intrusive marketing that will likely follow), you have to watch a number of YouTube videos demonstrating the product to get a clearer picture.

Basically, Packback’s core product is a discussion forum bundled with some increasingly common AI-supported interactions with human writing, plus some other standard ed-tech services. You can require students to participate in the forum every week by posting a question they have about that week’s course material, perhaps followed by their own attempt to answer or comment on that question.

Packback’s AI then moderates and sorts what students post. As the first screenshot shows, it flags questions for plagiarism and profanity. It can also flag “closed-ended questions” and questions about logistics (e.g., “when is the paper due?”).

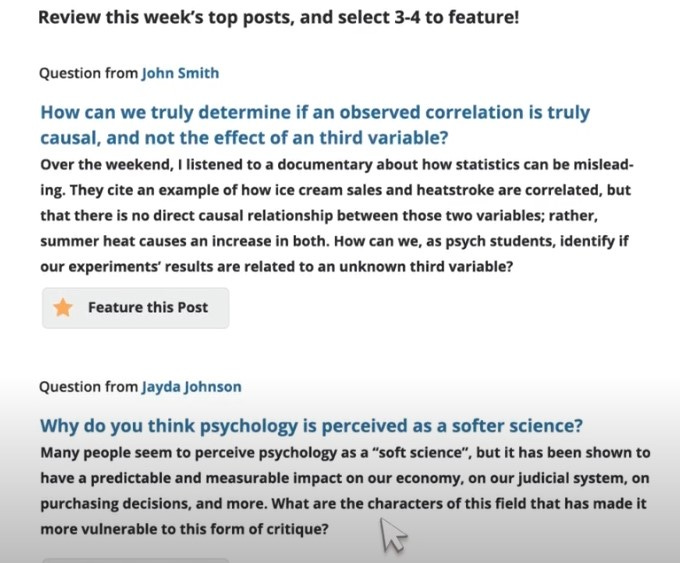

Packback’s designers also promise that the instructor can use a feature that sorts student comments by quality. The unstated implication is that if you’ve got a 400-person class where everyone is required to post a question to Packback every week, you can’t possibly read 400 questions in a timely way that often, so Packback will make sure that you see the best ones. “Best” here is determined by “curiosity score”, “sentence depth”, “post activity”, and “flag/moderation score”.

This is all fairly familiar: moderators who run high-traffic asynchronous forums have been trying to perfect similar forms of automation (some using AI, some using community feedback tools). A poster whose comments are frequently flagged or removed gets a downvoting tag or is permanently filtered. A highly active poster with strong contributions is automatically upvoted or moved to the top. Auto-complete features try to show you what other people often say, or help a sentence make grammatical sense. Attempts to automate grading or assessment have often relied on “sentence depth” metrics from natural language processing to identify textual richness and quality. AI researchers trying to improve the performance of software agents playing Atari 2600 games have come up with a “curiosity score” that incentivizes the AI to balance exploration and exploitation of the game environment. It’s not hard to envision a similar metric that identifies posts exploring the parameters of a given week’s material. (The simplest way, I think, would be to pick out posts that have a high sentence depth score but also use the most words not used by any other poster.)
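To make that parenthetical heuristic concrete, here is a minimal Python sketch. It is not Packback’s method, whose internals aren’t public, and every name in it is hypothetical. It rests on loud assumptions: “sentence depth” is replaced by a crude stand-in (average words per sentence) rather than a real parse-based measure; “high” means at or above that week’s median; and each student submits one post per week.

```python
import re
from collections import defaultdict
from statistics import median

# Word tokens: lowercase letters and apostrophes (a deliberately crude tokenizer).
WORD_RE = re.compile(r"[a-z']+")
# Sentence boundaries: runs of terminal punctuation.
SENTENCE_RE = re.compile(r"[.!?]+")


def vocabulary(text: str) -> set[str]:
    """Set of distinct lowercase word tokens in a post."""
    return set(WORD_RE.findall(text.lower()))


def depth_proxy(text: str) -> float:
    """HYPOTHETICAL stand-in for a 'sentence depth' score: mean words per sentence.

    Real NLP depth measures usually look at parse-tree structure; this is only a placeholder.
    """
    sentences = [s for s in SENTENCE_RE.split(text) if s.strip()]
    if not sentences:
        return 0.0
    return sum(len(WORD_RE.findall(s.lower())) for s in sentences) / len(sentences)


def curious_posts(posts: dict[str, str], top_k: int = 3) -> list[str]:
    """Rank authors whose posts clear the median depth proxy by count of words no one else used."""
    if not posts:
        return []

    vocab = {author: vocabulary(text) for author, text in posts.items()}

    # Map each word to the set of authors who used it.
    users_of: dict[str, set[str]] = defaultdict(set)
    for author, words in vocab.items():
        for word in words:
            users_of[word].add(author)

    def unique_count(author: str) -> int:
        # Words in this author's post that no other author used.
        return sum(1 for word in vocab[author] if users_of[word] == {author})

    depths = {author: depth_proxy(text) for author, text in posts.items()}
    cutoff = median(depths.values())  # "high" depth = at or above this week's median
    candidates = [author for author in posts if depths[author] >= cutoff]

    # Most unique words first; ties broken alphabetically so the output is deterministic.
    candidates.sort(key=lambda author: (-unique_count(author), author))
    return candidates[:top_k]


if __name__ == "__main__":
    week = {
        "ana": "When is the paper due?",
        "ben": "Why did the treaty collapse so quickly after the war? Was it economics or ideology?",
        "cai": "Why did the treaty collapse so fast? I think economic pressure on the smaller states mattered most.",
    }
    print(curious_posts(week))  # ['cai', 'ben']
```

Even this toy shows how blunt such scoring is: a student who reuses a classmate’s key term gets no credit for it, and a long, rambling question clears the depth bar as easily as a thoughtful one.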

Packback suggests that instructors can then highlight the best and most relevant posts as identified by the AI each week in order to show students what a good question and comment might look like. “If you get too busy”, the video notes, “your digital TA has your back”: it will auto-select the top posts and feature them. You can of course defy your digital TA and elevate a post that it didn’t identify, but then we’re back to the “400 posts every week” problem.

You still have a role as a human being with professional qualifications, never fear! You can offer specific praise on the best posts; that praise appears publicly and extends your pedagogy by telling students what makes those posts exemplary. “All the students can see you are present in the discussion.” You can also take a post that you’d like everybody to respond to in their own questions and pin it to the top of the discussion, another standard asynchronous forum management tool.

That could in fact be a reason to lower your blood pressure and not worry too much about the product. Arguably, Packback is a teaching machine for faculty more than for students: it provides a soft, friendly introduction to managing an asynchronous forum at a pandemic moment when many faculty have needed to do that for the first time. People with long experience in asynchronous discussions (I graduated in the class of Usenet and GEnie’s Science Fiction Roundtable) may not need the conceptual help and may be more practiced at scanning a very large content space to spot the signal amid the noise.

Nevertheless, I worry. Some ed-tech is pure snake oil and is in some sense easily dismissed. Some ed-tech is hyped by people with a stake in it who recognize that “creatively destroying” existing educational institutions is key to getting their payout. So far that strategy has failed. I don’t think Packback is either of those things, but it’s not hard to imagine it becoming a tool for the destroyers to use, or a tool that destroys without anyone meaning it to.

Like a lot of Big Tech’s products, Packback is essentially a way to avoid doing something important that our society is presently unprepared to do. The answer to a 400-person class taught by an adjunct who doesn’t have the time, support, or compensation to teach the diverse range of learners actually in their classroom should be “hire more people under better working conditions, make classes smaller, and provide professional support from trained people for students coming from a variety of preparations or backgrounds”. AI in these applications becomes a way to cut corners and make more revenue flow to owners or managers rather than to workers.

It’s the same with the way social media platforms lean on AI to filter content and apply standards rather than hiring enough well-trained editors and monitors to raise the quality of contributions and relentlessly keep abusive or false material off the platform. At truly mass scale, AI can never do that job, no matter how much it is trained in the endless ouroboros of “read the last corpora in order to shape the next corpora to look like the last one”. Even as AI-mediated cyborgs, humans retain enough agency and organic cunning to find where the algorithm stops working, and to discover whom the algorithm targets and whom it ignores, because the purely human-created text it was trained on did the same.

Let’s say Packback, or some close imitator or successor, really takes hold. It’s easy to see the next steps that will be offered sincerely as feature advancements. You’ll be able to toggle it so it starts writing praise for you when you’re really busy! It’ll start auto-grading students for their discussion participation, no need for you to micromanage it. It’ll start pulling relevant citations or links into the discussion for students to look at. It’ll start offering simple explanations of important concepts pulled from Wikipedia and so on. (That’s already out there in the world in other platforms or ed-tech.)

Then the next steps: it won’t need a single instructor to supervise it. We’ll be able to assemble a class with ten lectures from ten different faculty and attach a Packback backend for discussion of the material. Maybe we’ll assign an instructional technologist to make sure Packback discussions are working as intended, but they won’t need to really know the content. Maybe we’ll hire a content manager who knows a little about the relevant material, just enough to keep the AI’s training on target so that it isn’t embarrassing. Maybe we’ll be able to get expensive content creators out of the mix entirely, or just pay them a one-time fee for their work and wish them well.

This isn’t slippery-slope stuff. It’s what the last two or so rounds of massive online courses from various purveyors were trying to do: connect content from qualified professionals on the front end, in the form of recorded or live lectures, to text-based asynchronous and synchronous interfaces for interaction among students and between faculty and students. They mostly haven’t succeeded, partly because the intrinsic motivation needed to self-teach, even with an assistive interface, wasn’t there for most of those courses, but also because at the scale those courses were offered, the tools just couldn’t do enough and the teachers couldn’t possibly make up the difference. The desire to make this work very much remains; as Watters observes in her book, it has a deep and intricate history.

Tech in its present form, however benevolent or well-intentioned its designers, does not stop at the boundaries of the advisable or the possible. I can’t expect fellow professionals who are being put in working situations they cannot plausibly handle through their own trained labor to resist anything that helps them do what they know their students need (to ask questions that get answered, to discuss and ponder material together, to sharpen their skills at using and refining what they’re learning). And I can’t imagine that we can ask people not to make tools that will almost certainly be pushed, at some point, beyond what they can or should be used to do. Those tools will push well-trained human professionals out of labor they once did well and could keep doing well into the future, if only we could decide to invest in quality everywhere rather than indulge a relentless desire to pay for little and accumulate everything in fewer and fewer pockets.

I can hope, though. Hope that we all can see where what we’re being offered could be used to end our profession altogether. Hope that we can all see where we (designers, professionals, leaders) have to have a conversation about AI and put limits on its uses and abuses. Hope that we can end up in agreement that if we really want people to learn, there’s no alternative to having trained professionals who know the material involved in deeply human and present ways.

Image credit: Photo by Maximalfocus on Unsplash